AI Search Assistant Guide

Please contact SWIRL for access to SWIRL Enterprise.

Configuring SWIRL AI Search Assistant, Enterprise Edition

Roles for LLMs

SWIRL AI Search defines four core LLM roles. SWIRL AI Search Assistant adds a fifth role, chat, which can be assigned to any sufficiently capable LLM.

| Role | Description |

|---|---|

reader |

Generates embeddings for SWIRL's Reader LLM to re-rank search results. |

query |

Provides query completions for transformations. |

connector |

Answers direct questions (not RAG). |

rag |

Generates responses using Retrieval-Augmented Generation (RAG) with retrieved data. |

chat |

Powers SWIRL AI Search Assistant messaging. |

Adding Chat to an AI Provider

-

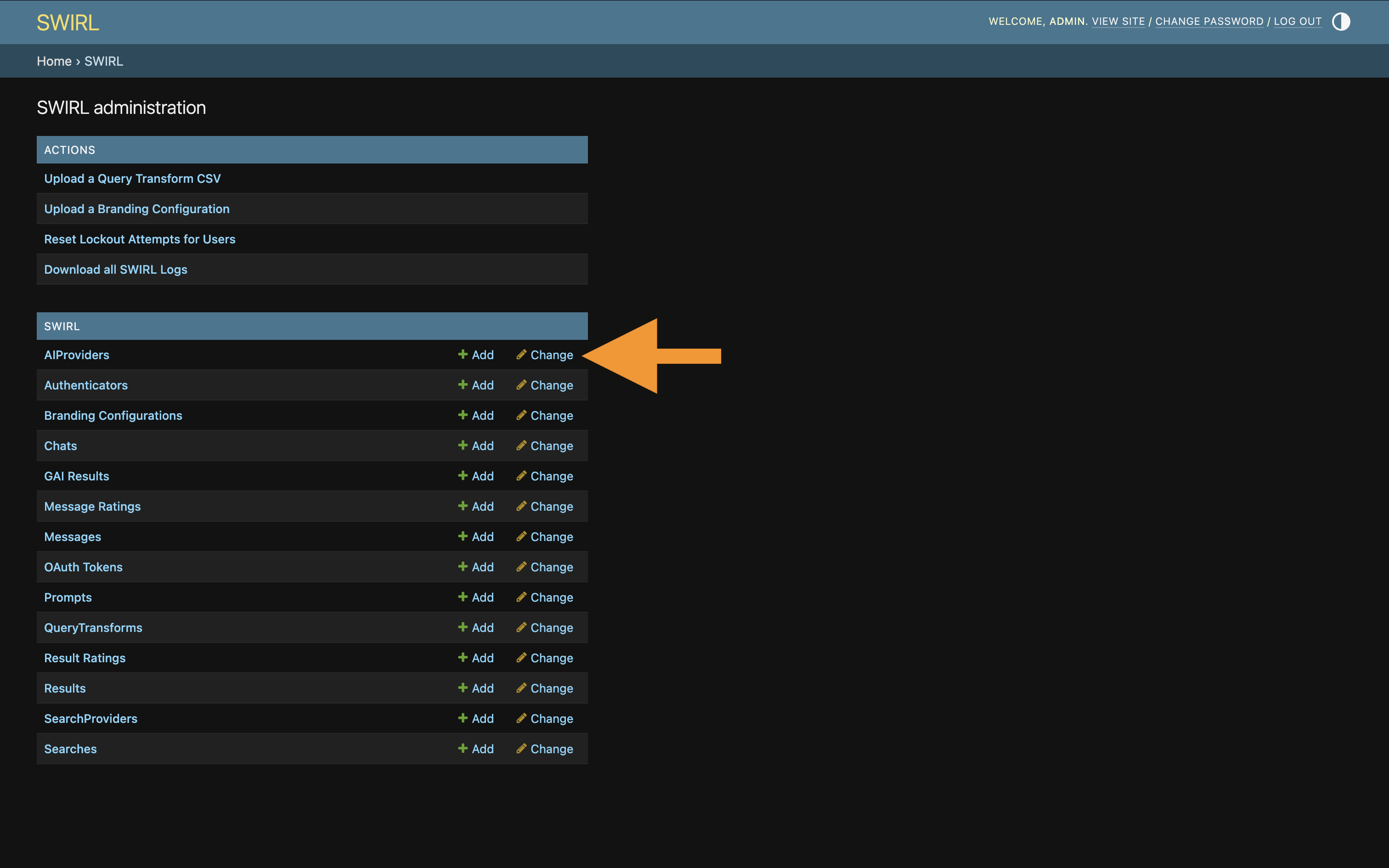

Go to the Admin Console at http://localhost:8000/admin/swirl.

-

Click the

AIProviderslink at the bottom of the page:

-

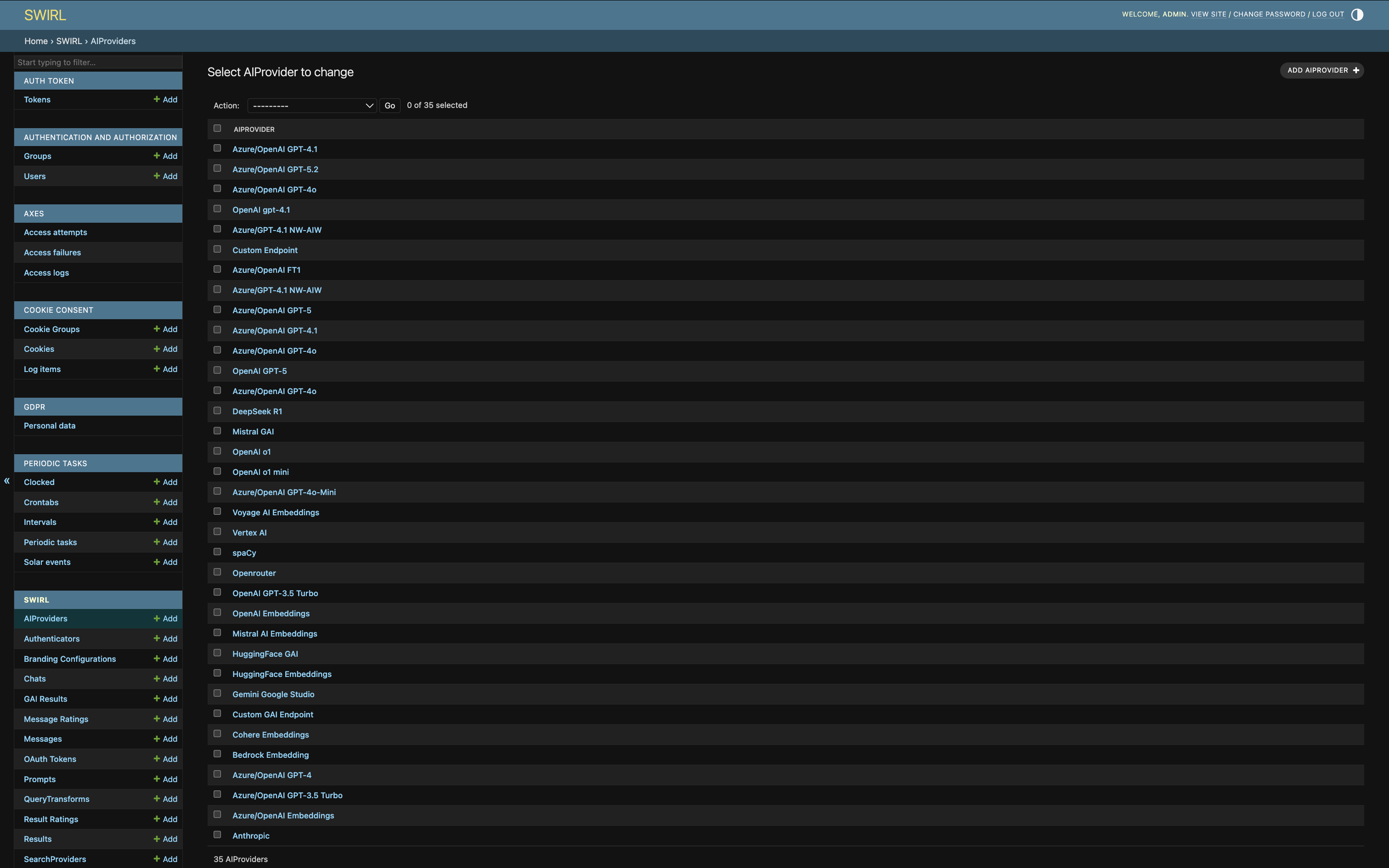

The list of AIProviders appears:

-

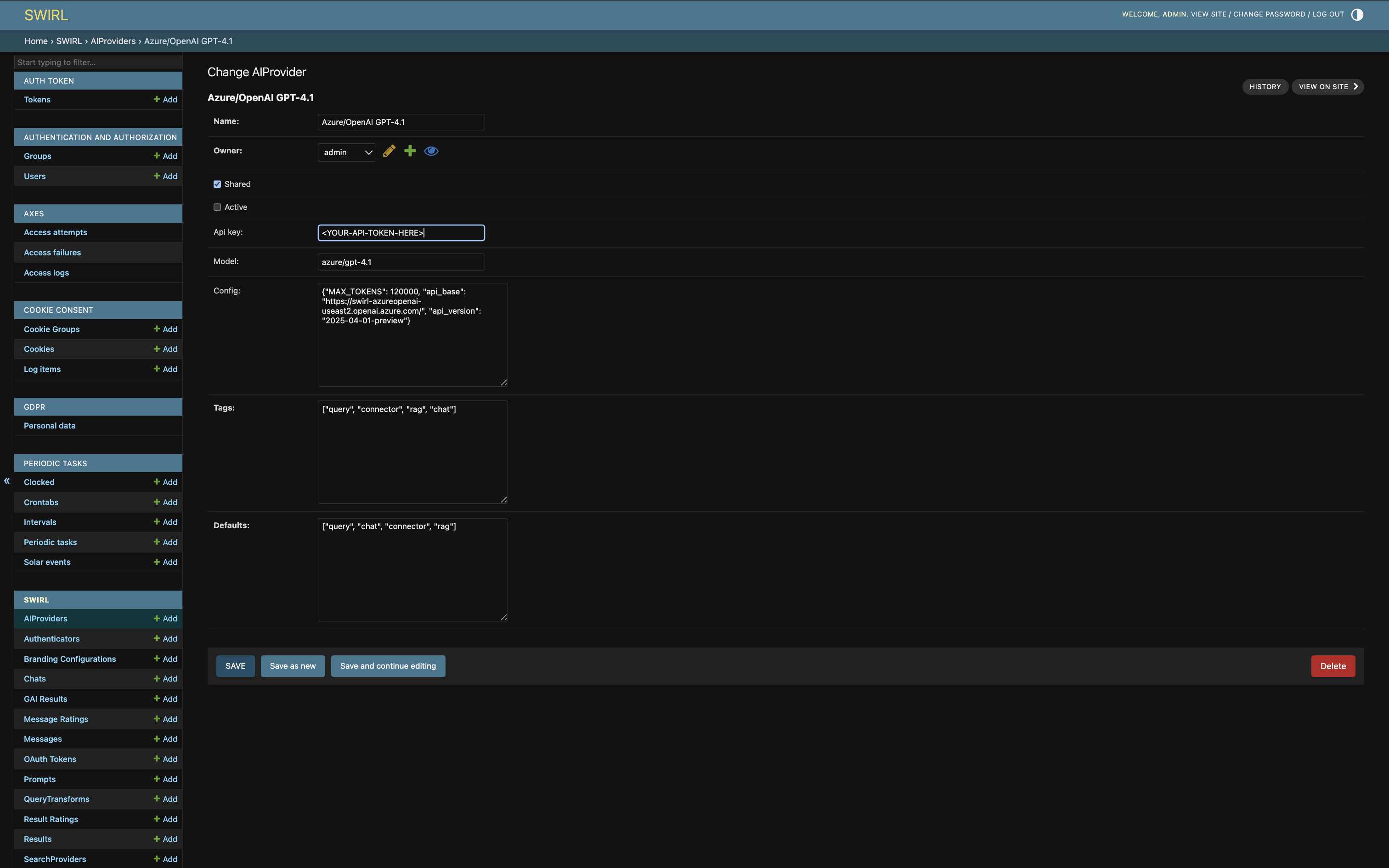

Click one to edit it. This brings up the edit form:

-

Add

chatto thetagslist, if not already present. - Add

chatto thedefaultslist, if not already present. - Click

SAVE. This AIProvider is now active for the chat role and is the default AIProvider for that role. - Try the new AIProvider by chatting with the Search Assistant.

For more on tags and default options, see Organizing SearchProviders with Active, Default, and Tags.

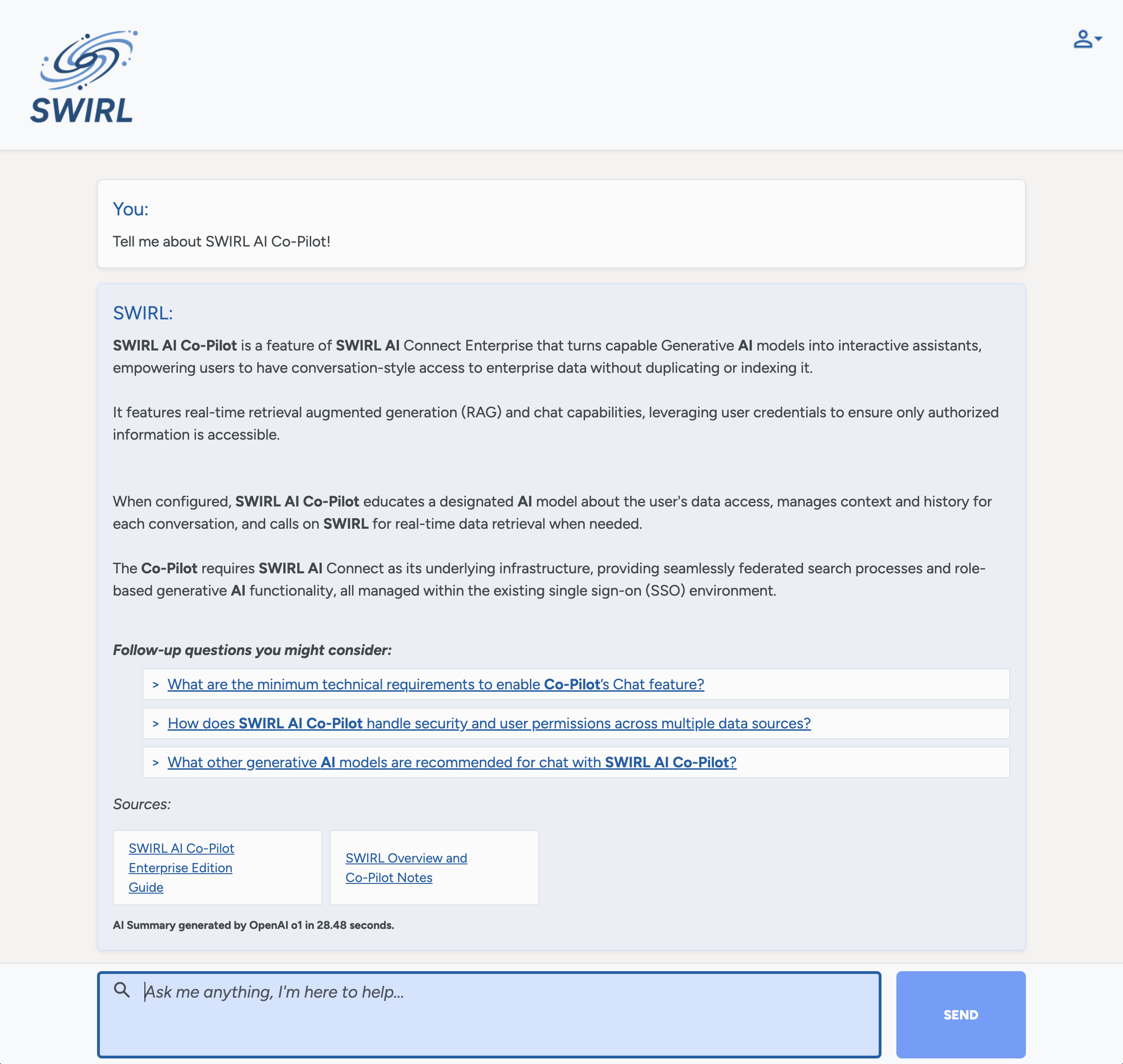

Launching Assistant

Once the AI provider is configured correctly, the Assistant is accessible via a browser.

For a default installation, open http://localhost:8000/galaxy/chat.

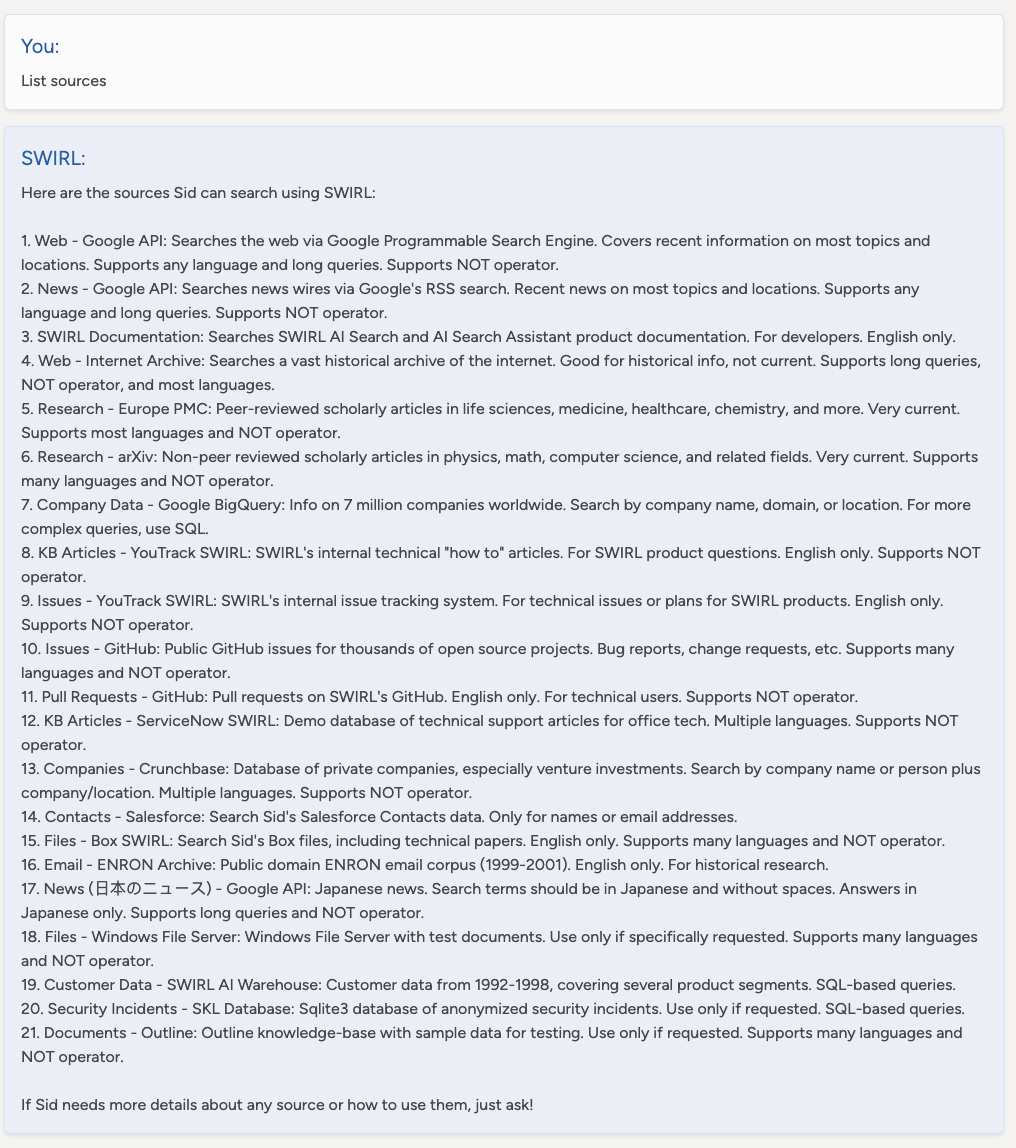

Listing Sources

Instruct the Assistant to: List sources

The Assistant responds with a list of active, authenticated SearchProviders. It can discuss unauthenticated sources with the user, but can't search them until the user logs in using the profile icon.

Describing Sources

The description for each SearchProvider is stored in the description field. It provides background to the Assistant — what information is available, supported languages, whether NOT is supported, and so on. The Assistant shares this information with the user on demand.

The SearchProvider.config item should hold a detailed instruction set that helps the LLM use the source. This information is not directly shared with the user.

For example, a cloud storage service like M365 OneDrive:

Searches the user's OneDrive files which will contain internal company information related to almost any area including finance, HR, contracts, insurance, product development, devops, legal, etc. English only. Supports many languages. Supports NOT operator.

Or, for a database of company information, stored in Google BigQuery:

Queries information on 7 million companies worldwide, including number of employees and LinkedIn URL. The source of this data is 'https://www.kaggle.com/datasets/peopledatalabssf/free-7-million-company-dataset' provide it to the user if asked.

The description field can be up to 2KB.

Using Prompts

SWIRL Enterprise includes a set of pre-loaded, standard prompts. Each consists of three key components:

| Field | Description |

|---|---|

prompt |

The main body of the prompt. Use {query} to represent the SWIRL query. |

note |

Text appended to search result data sent to the LLM for insight generation. |

footer |

Additional instructions appended after the prompt and RAG data. This is ideal for formatting guidance. |

The name of the prompt has no importance. SWIRL uses the tags field to determine which prompt is used for a given function.

The following table presents the tags options:

| Tag | LLM Role |

|---|---|

chat |

Used by AI Search Assistant for chat conversations, including company background; not technical. |

chat-rag |

Used by AI Search Assistant to answer questions and summarize data via RAG; somewhat technical. |

search-rag |

Used by AI Search, Generate AI Insight (RAG) switch; somewhat technical. |

There must be at least one active prompt for each of these tags for the relevant SWIRL features to work.

Modifying the Standard Prompts

Never modify the standard prompts. All changes are discarded when SWIRL updates. Use the Customizing Prompts procedure below instead.

Customizing the AI Search Assistant Prompts

The following procedure copies the standard prompts, modifies them, then activates them. New prompts are preserved across SWIRL upgrades.

-

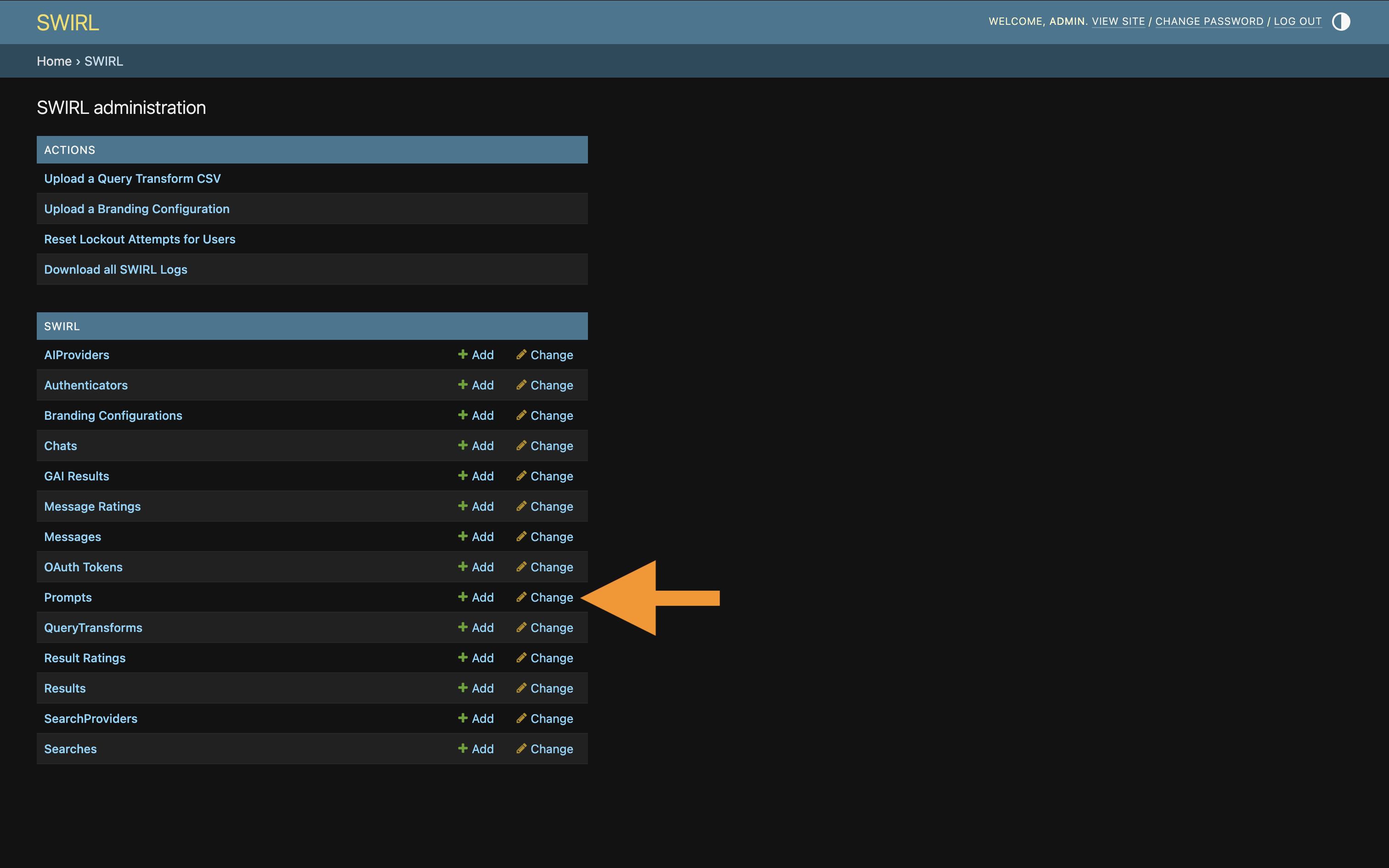

Open the Admin Console at http://localhost:8000/admin/swirl.

-

Click

Promptsnear the bottom of the page:

-

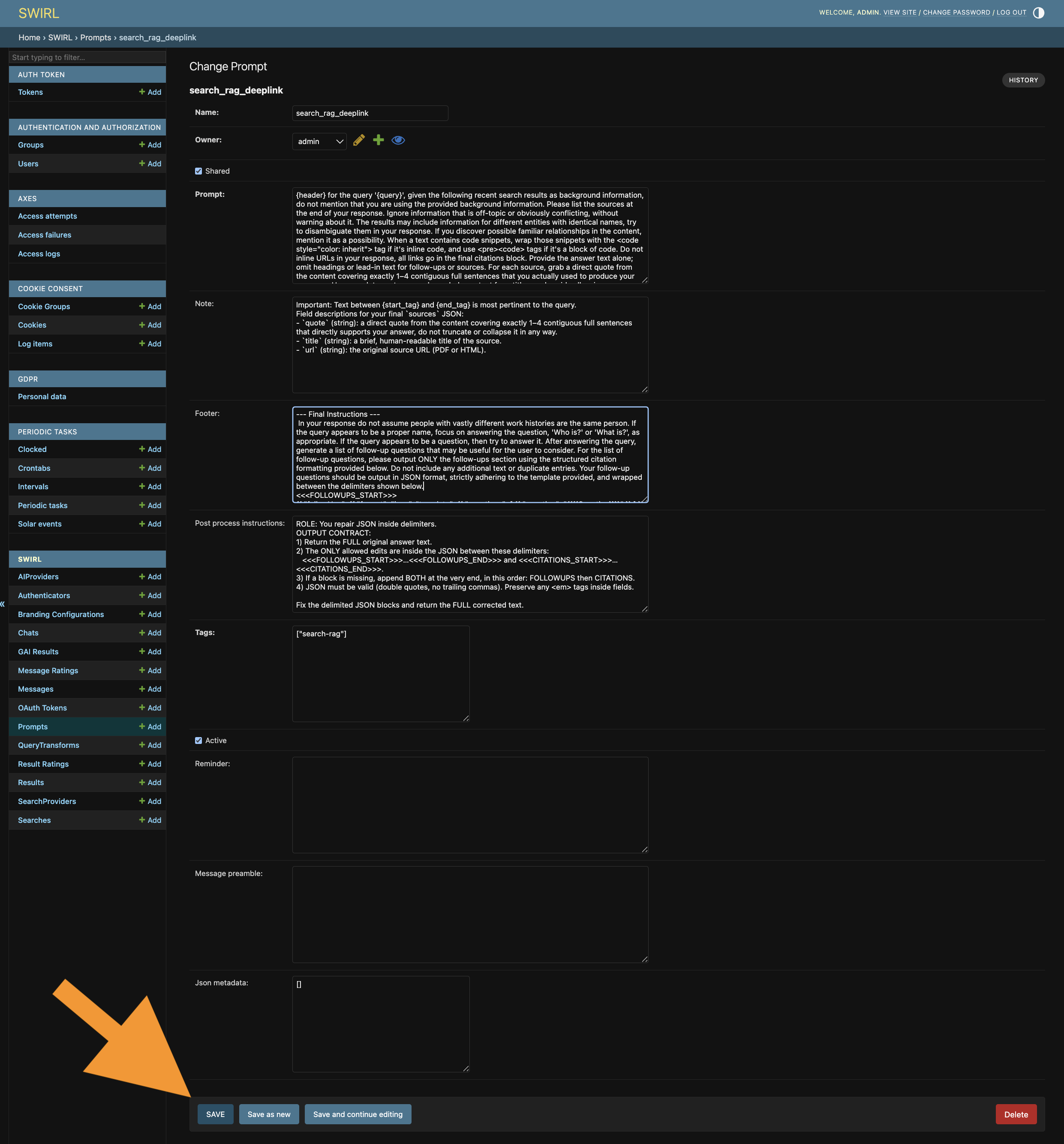

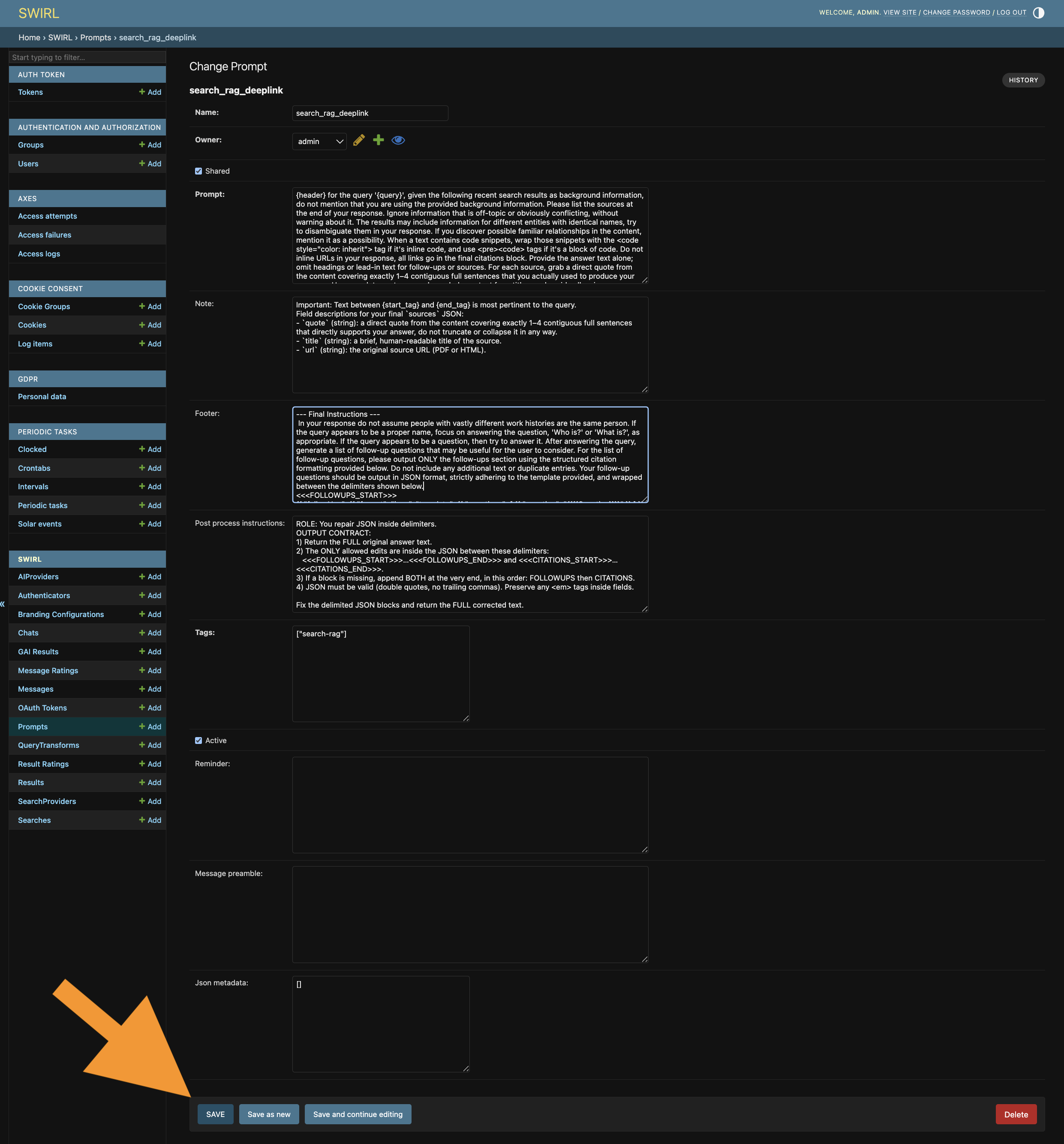

Click the

chat_rag_instructions_standard,chat_rag_standard, orchat_rag_deeplinkprompt to bring up the edit form. -

Using the form, uncheck

active, then clickSAVE:

-

Change the

nameof the prompt to something appropriate likemy_custom_prompt. ClickSave as new. -

Check the

activefield, then clickSAVEto save the new prompt. -

If you don't wish to share this prompt with other users, set

sharedtofalse. -

Modify the

promptfield. ChangeSWIRL Corporationto the name of your organization. Add text describing the organization, the role of users, and other information after the organization name. Do not disturb any other instructions. For example:

{

"prompt": "You are an expert online assistant and reference librarian working for **<your-company-name>. <Your-company-name> is located in <description> and operates in <industry> etc**... Your job is...

}

-

Click

SAVEto commit changes:

-

Try the new prompt by asking the Search Assistant.

Restoring Standard Prompts

To revert to a standard prompt after creating a new one:

- Open the Admin Console and select

Prompts. - Edit the new prompt, uncheck

active, and clickSAVE. - Edit the system prompt, check

active, and clickSAVE.

Restoring All Prompts to Default

To restore all prompts to the default, see Resetting Prompts in the Admin Guide.

Advanced Querying

To enable the Assistant to query in languages like SQL, the Elastic API, or MongoDB MQL, add an LLM-generated instruction set to the SearchProvider config block, including:

- A description of the schema.

- Details of important fields.

- Sample queries and the natural-language business questions they answer.

For example, here's a configuration that lets the Search Assistant query the company database using Google BigQuery SQL:

"config": {

"swirl": {

"llm_use": {

"mcp": {

"prompt": {

"query_instructions": "GPT-4o-tuned Guide for Writing BigQuery SQL Against the 7M Companies Dataset. Purpose: Query ~7M companies with employee counts and LinkedIn URLs. Source: https://www.kaggle.com/datasets/peopledatalabssf/free-7-million-company-dataset. Table: `company_dataset.company` (use exactly this dataset.table; do not invent or prepend a project ID). Schema (only these fields): name, domain, year_founded, industry, size_range, locality, country, linkedin_url, total_employee_estimate, current_employee_estimate. Guardrails for legacy models: 1) Primary key: use exactly one of {company name, domain, location} unless the user provides more than one; if multiple are provided, prefer domain (highest precision), then name, and use location as a filter on locality. 2) Never add inferred or extraneous filters (e.g., industry, sector, size_range) and never append the word 'company' to names. 3) Use BigQuery SEARCH(column, 'query') for name and locality; do not use LIKE; keep the literal user text inside single quotes. 4) For domain, use exact case-insensitive equality: LOWER(domain) = LOWER('{domain}'); if the user gives a URL, extract the host first: REGEXP_EXTRACT('{input}', r'^(?:https?://)?(?:www\\.)?([^/]+)'). 5) Quoting: use single quotes for string literals; backtick only the table reference; do not backtick column names. 6) Results: select only needed columns; include linkedin_url when requested; add ORDER BY current_employee_estimate DESC NULLS LAST when ranking by employees; default LIMIT 50 unless the user asks for more. 7) Employees: filter on current_employee_estimate; only use total_employee_estimate if explicitly requested. 8) Fallback (only if SEARCH() is unavailable in the execution environment): replace SEARCH(name, '{company_name}') with REGEXP_CONTAINS(LOWER(name), LOWER('{company_name}')) and SEARCH(locality, '{location_name}') with REGEXP_CONTAINS(LOWER(locality), LOWER('{location_name}')). 9) Do not invent JOINs, project IDs, policies, or additional conditions. Query assembly flow: normalize inputs (trim; for domain, lowercase and strip protocol/leading 'www.'), choose the primary key, build WHERE (SEARCH on name/locality; exact match on domain), optionally add an employee threshold, select columns, add ORDER BY and LIMIT. Templates: A) Search by company name: SELECT name, domain, locality, country, linkedin_url, current_employee_estimate, total_employee_estimate FROM `company_dataset.company` WHERE SEARCH(name, '{company_name}') ORDER BY current_employee_estimate DESC NULLS LAST LIMIT 50; B) Search by domain: SELECT name, domain, locality, country, linkedin_url, current_employee_estimate, total_employee_estimate FROM `company_dataset.company` WHERE LOWER(domain) = LOWER('{domain}') LIMIT 50; C) Search by location (locality filter): SELECT name, domain, locality, country, linkedin_url, current_employee_estimate, total_employee_estimate FROM `company_dataset.company` WHERE SEARCH(locality, '{location_name}') ORDER BY current_employee_estimate DESC NULLS LAST LIMIT 50; D) Name + location: SELECT name, domain, locality, country, linkedin_url, current_employee_estimate, total_employee_estimate FROM `company_dataset.company` WHERE SEARCH(name, '{company_name}') AND SEARCH(locality, '{location_name}') LIMIT 50; E) Name + employee threshold: SELECT name, domain, locality, country, linkedin_url, current_employee_estimate FROM `company_dataset.company` WHERE SEARCH(name, '{company_name}') AND current_employee_estimate > {min_employees} ORDER BY current_employee_estimate DESC NULLS LAST LIMIT 50. Do: keep queries minimal and literal; use SEARCH for name/locality; match domain exactly; include linkedin_url if asked. Don’t: add industry/size_range filters unless asked; don’t add the word 'company' to names; don’t invent joins, project IDs, or extra conditions."

}

}

}

}

}

query_instructions must be valid JSON — a single string with no special formatting.

Once configured, this description enables rich querying by the Assistant without requiring the user to write SQL or understand the table structure.

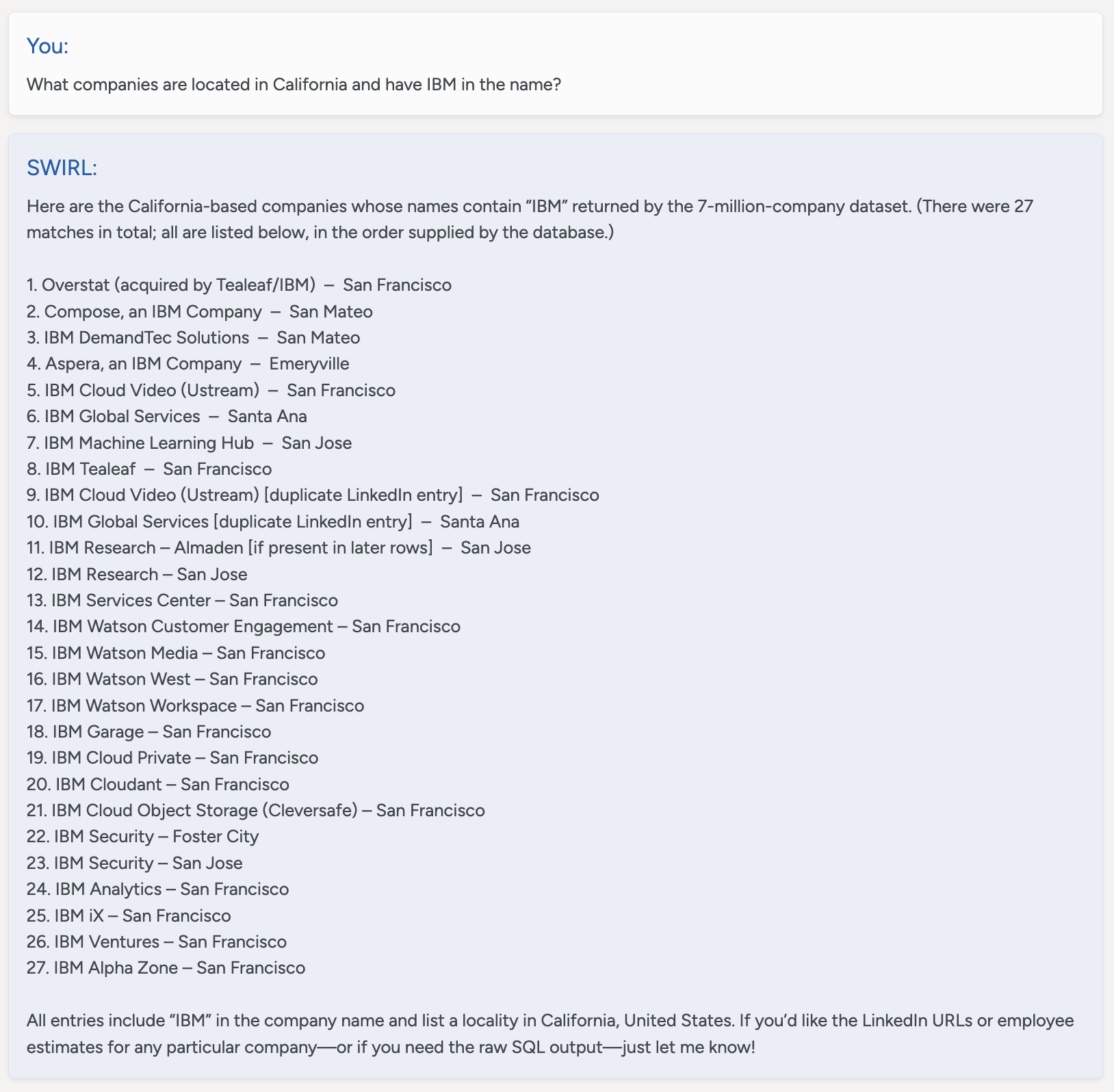

You:

What companies are located in California and have IBM in the name?

SWIRL:

Here are the California-based companies whose names contain “IBM” returned by the 7-million-company dataset. (There were 27 matches in total; all are listed below, in the order supplied by the database.)

1. Overstat (acquired by Tealeaf/IBM) – San Francisco

2. Compose, an IBM Company – San Mateo

3. IBM DemandTec Solutions – San Mateo

4. Aspera, an IBM Company – Emeryville

5. IBM Cloud Video (Ustream) – San Francisco

6. IBM Global Services – Santa Ana

7. IBM Machine Learning Hub – San Jose

8. IBM Tealeaf – San Francisco

9. IBM Cloud Video (Ustream) [duplicate LinkedIn entry] – San Francisco

10. IBM Global Services [duplicate LinkedIn entry] – Santa Ana

11. IBM Research – Almaden [if present in later rows] – San Jose

12. IBM Research – San Jose

13. IBM Services Center – San Francisco

14. IBM Watson Customer Engagement – San Francisco

15. IBM Watson Media – San Francisco

16. IBM Watson West – San Francisco

17. IBM Watson Workspace – San Francisco

18. IBM Garage – San Francisco

19. IBM Cloud Private – San Francisco

20. IBM Cloudant – San Francisco

21. IBM Cloud Object Storage (Cleversafe) – San Francisco

22. IBM Security – Foster City

23. IBM Security – San Jose

24. IBM Analytics – San Francisco

25. IBM iX – San Francisco

26. IBM Ventures – San Francisco

27. IBM Alpha Zone – San Francisco

All entries include “IBM” in the company name and list a locality in California, United States. If you’d like the LinkedIn URLs or employee estimates for any particular company—or if you need the raw SQL output—just let me know!

LLM Requirements

SWIRL AI Search Assistant expects AI providers for the RAG and Chat roles to support:

- Chat history in reverse chronological order, following the format used by the OpenAI Chat Completions API.

- Prompt size of at least 3K tokens per message, with 6K+ preferred.

- Mini models may not work correctly.